How to Measure Developer Productivity Without the Drama

By Gabriel (@gabriel__xyz)

For years, engineering leaders have been stuck trying to measure developer productivity with simplistic, output-based numbers like lines of code (LOC) or story points completed. These metrics feel concrete, but they usually cause more problems than they solve. They give you a warped view of what actually drives engineering success.

The real answer? Stop focusing on individual output and start looking at the health and flow of your entire software delivery system.

Why Traditional Productivity Metrics Are Broken

The biggest issue with old-school metrics is they measure activity, not impact. Think about it: one developer could churn out 1,000 lines of inefficient, buggy code, while another solves the same problem with 50 elegant lines. Who was more productive? Counting lines of code would reward the wrong behavior entirely.

This approach ends up incentivizing all the wrong things:

* **Gaming the System:** Developers might split a simple task into multiple commits to inflate their numbers or add pointless code just to boost their LOC count.

* **Ignoring Collaboration:** Things that *actually* make a team better—like mentoring junior devs, doing thorough code reviews, or improving documentation—don't show up. You're effectively punishing teamwork.

* **Increasing Burnout:** When people feel judged by arbitrary numbers, it creates toxic competition and immense pressure. This is a fast track to burnout and sinking morale.

Moving Beyond Individual Output

The core problem is treating software development like an assembly line, where every worker's output is easily counted. But modern software engineering is a creative, collaborative, and messy process. A developer's true value isn't just in the code they write, but in how they contribute to the team's overall speed and the system's stability.

The most productive developers aren't always the ones closing the most tickets. They're the ones who make the entire team faster by unblocking others, improving processes, and writing clean, maintainable code that prevents future headaches.

Instead of getting bogged down by misleading metrics, focusing on process improvements like solid code review best practices will tell you way more about your team's velocity and code quality. This requires a new way of thinking—one that puts system health above individual heroics.

A Healthier Approach to Measurement

To truly understand and improve how your team delivers value, you need frameworks that look at the entire development lifecycle. This is where modern methodologies like DORA (DevOps Research and Assessment) and the SPACE framework come into play.

DORA metrics zero in on the speed and stability of your delivery pipeline, giving you a high-level view of system efficiency. The SPACE framework complements this perfectly by measuring the human side of development, including things like developer satisfaction, well-being, and collaboration.

Together, they provide a balanced, holistic picture of productivity that actually connects team health to business outcomes.

When you want to understand your engineering team's performance, looking at individual activity is a dead end. Instead, you need to measure the health of the entire system. That's where DORA (DevOps Research and Assessment) metrics come in.

Think of DORA as a diagnostic dashboard for your whole software delivery pipeline, not a report card for a single developer. It gives you a powerful, industry-validated way to see how fast and stable your engineering process really is.

By tracking the right things, you shift the conversation from "Who's working the hardest?" to "Where are our biggest bottlenecks holding us back?"

The Four Pillars of DORA

So, how do you actually measure developer productivity without falling into old traps? You start with these four key metrics, which are broken down into two simple categories: speed (or throughput) and stability.

Speed Metrics

* **Deployment Frequency:** How often do you successfully get code into production? Top-tier teams push small, frequent changes, which lowers risk and gets them feedback much faster.

* **Lead Time for Changes:** How long does it take for a committed change to actually go live? This metric tracks the entire journey from commit to deployment, shining a light on delays in code review, testing, and release cycles.

Stability Metrics

* **Change Failure Rate:** When you deploy, what percentage of those changes cause a problem? This could be a degraded service or something that needs a hotfix or rollback right away. It's a direct reflection of your quality and how reliable your process is.

* **Mean Time to Recovery (MTTR):** After a production failure, how long does it take to get things back up and running? This shows how resilient your system is and how quickly your team can respond when things go wrong.

The real magic of DORA metrics is that they measure outcomes, not output. They create a shared language that engineering, product, and business folks can all use to talk about the health of the delivery process and how it directly impacts customers.
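To make the four definitions concrete, here is a minimal sketch that computes them from a list of deployment records. The `Deployment` record, its field names, and the `dora_summary` helper are hypothetical; your CI/CD and incident tools will expose this data in their own shapes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # degraded service, hotfix, or rollback
    restored_at: Optional[datetime] = None  # when service recovered, if it failed


def dora_summary(deploys, period_days):
    """Summarize the four DORA metrics for one team over one period."""
    if not deploys:
        raise ValueError("need at least one deployment")
    lead_times = [d.deployed_at - d.committed_at for d in deploys]
    failures = [d for d in deploys if d.failed]
    recoveries = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deploys_per_day": len(deploys) / period_days,
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deploys),
        "mean_time_to_recovery": (
            sum(recoveries, timedelta()) / len(recoveries) if recoveries else None
        ),
    }


# Made-up sample data: four deployments over two days, one of which failed.
t0 = datetime(2024, 1, 1, 9, 0)
sample = [
    Deployment(t0, t0 + timedelta(hours=2)),
    Deployment(t0, t0 + timedelta(hours=4), failed=True,
               restored_at=t0 + timedelta(hours=5)),
    Deployment(t0 + timedelta(days=1), t0 + timedelta(days=1, hours=6)),
    Deployment(t0 + timedelta(days=1), t0 + timedelta(days=1, hours=8)),
]
summary = dora_summary(sample, period_days=2)
for name, value in summary.items():
    print(f"{name}: {value}")
```

The sketch uses the median for lead time so a single stuck change doesn't skew the number. That is a design choice, not something the metric definition requires.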

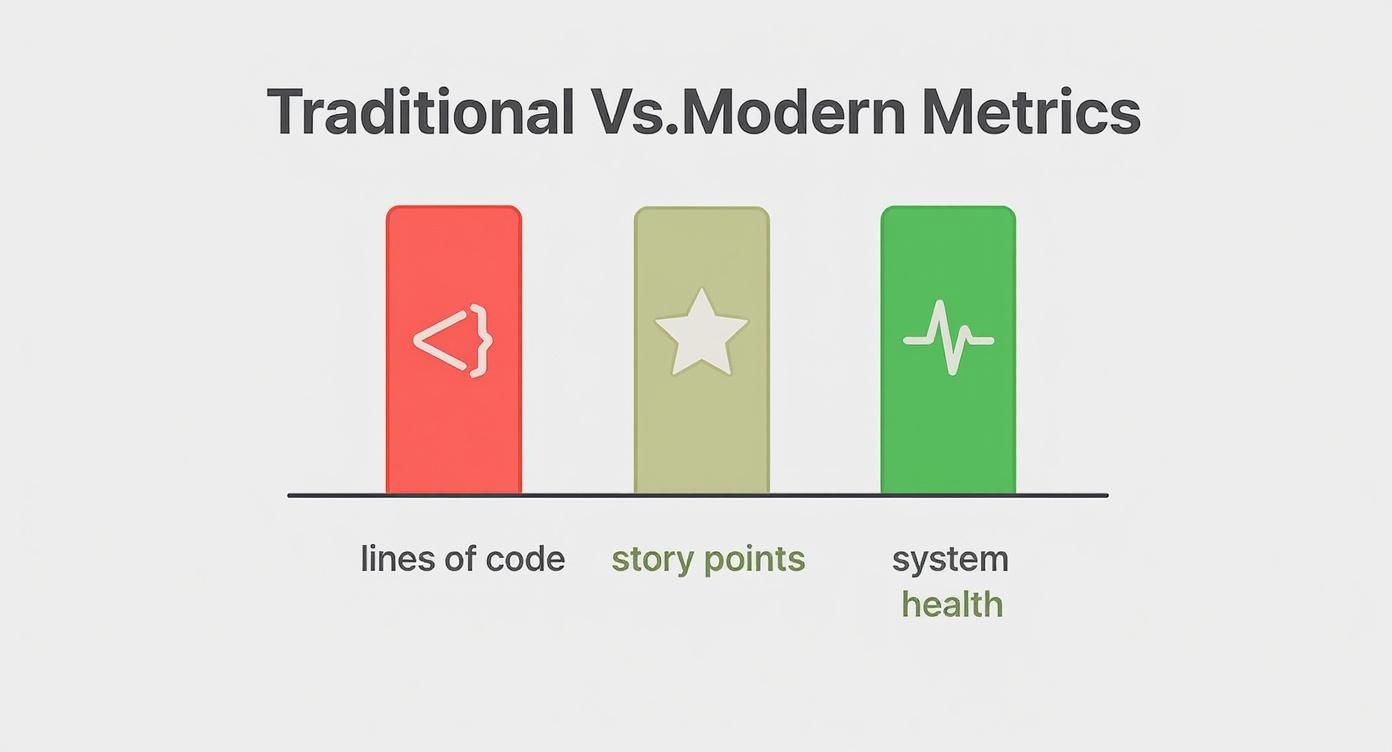

This infographic nails the shift in thinking. It moves away from outdated, easily gamed metrics like "Lines of Code" and toward modern, system-level indicators like DORA.

As you can see, the focus is no longer on individual activity but on the overall performance and health of the entire engineering system.

What Good Looks Like: Elite vs. Low Performers

To make these metrics useful, you need something to aim for. The annual State of DevOps Report gives us a clear picture of what separates the best engineering teams from the rest.

According to the report's benchmarks, elite teams deploy on demand, often multiple times a day, while low-performing teams may ship only once every one to six months. (The 2021 edition put the gap at 973 times more frequent deployments for elite teams.) The lead time for changes for top teams is often less than an hour; for low performers, it can stretch to over a month.

Elite teams also keep their change failure rate under 15% and can bounce back from incidents in less than an hour. Meanwhile, low performers might take more than a week to recover. Exact thresholds shift from one edition to the next; you can dig into more of these benchmarks in the 2023 State of DevOps Report.

To put it in perspective, here’s a direct comparison of what those different performance levels look like.

DORA Metrics: Elite vs. Low Performers

The gap between elite and low-performing teams is staggering. This table breaks down the key differences based on industry benchmarks, giving you a tangible sense of what's possible.

| Metric | Elite Performers | Low Performers |
|---|---|---|
| Deployment Frequency | On-demand (multiple times per day) | Between once per month and once every 6 months |
| Lead Time for Changes | Less than one hour | Between one month and six months |
| Change Failure Rate | 0-15% | 46-60% |
| Mean Time to Recovery | Less than one hour | More than one week |

Seeing these numbers side-by-side provides some concrete goals. If your lead time is currently measured in weeks, setting a goal to get it down to days is a huge—and totally achievable—first step.

Getting Started with Tracking DORA Metrics

You don’t need to buy expensive, specialized tools to get started. You can begin tracking DORA metrics right now with the systems you already have, like Git, Jira, and your CI/CD platform. For example, you can calculate Deployment Frequency simply by counting the deployments in your CI/CD logs.
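As a rough illustration of that counting approach, the sketch below tallies successful production deployments per ISO week. The comma-separated `timestamp,environment,status` line format is an assumption made for this example; real CI/CD platforms export logs in their own formats, so the parsing step will need adapting.

```python
from collections import Counter
from datetime import datetime


def weekly_deploy_counts(log_lines):
    """Count successful production deployments per ISO week."""
    counts = Counter()
    for line in log_lines:
        line = line.strip()
        if not line:
            continue
        # Hypothetical export format: timestamp,environment,status
        timestamp, environment, status = line.split(",")
        if environment != "production" or status != "success":
            continue
        year, week, _ = datetime.fromisoformat(timestamp).isocalendar()
        counts[f"{year}-W{week:02d}"] += 1
    return dict(counts)


# Made-up sample log lines.
sample_log = [
    "2024-01-02T10:15:00,production,success",
    "2024-01-03T16:40:00,production,failed",
    "2024-01-04T09:05:00,staging,success",
    "2024-01-05T11:30:00,production,success",
    "2024-01-09T14:00:00,production,success",
]
counts = weekly_deploy_counts(sample_log)
print(counts)
```

Failed runs and non-production environments are filtered out because Deployment Frequency only counts changes that successfully reach production.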

Improving your system's health and DORA metrics often comes down to streamlining your pipeline. Understanding the various workflow automation benefits is a great place to start, as automating manual steps has a direct, positive impact on both Lead Time for Changes and Deployment Frequency.

For a deeper dive into actionable steps, check out our guide, [7 Proven Strategies to Reduce Cycle Time in 2025](https://blog.pullnotifier.com/blog/7-proven-strategies-to-reduce-cycle-time-in-2025).

As your team gets more comfortable with these metrics, you can look into dedicated engineering intelligence platforms that automate data collection and offer deeper insights. The main goal is to make these metrics visible and use them to start conversations about making system-wide improvements.

Understand the Human Element with the SPACE Framework

While DORA metrics give you a fantastic, high-level view of your system's health, they don't paint the whole picture. Let's say your deployment frequency suddenly tanks. Is it because the team is wrestling with a complex new feature, a broken CI pipeline, or just plain burnout? DORA tells you what is happening; the SPACE framework helps you understand why.

Introduced back in 2021 by researchers at GitHub, Microsoft, and the University of Victoria, SPACE offers a more holistic way to look at developer productivity. It pushes beyond just speed and stability to bring in the crucial human elements that really drive great software development. This isn't just theory: a 2022 survey found that teams scoring high on SPACE metrics were 30% more likely to report high job satisfaction and 25% more likely to ship projects on time. You can dig into the findings and see how they're measuring developer productivity.

The framework is built around five key dimensions, each giving you a different lens to view your team’s effectiveness.

This diagram from the original research paper shows how these five dimensions aren't isolated but are all interconnected, giving you that complete, holistic view of what productivity actually looks like.

It’s a great visual reminder that productivity isn't a single number but a mix of individual attitudes, system performance, and team dynamics working together.

Satisfaction and Well-being

This one is all about how fulfilled, happy, and healthy your developers feel. It’s simple: engaged developers are more creative, collaborative, and much more likely to stick around. High turnover and burnout are productivity’s kryptonite.

How to measure it:

* **Pulse Surveys:** Keep it simple and regular. Weekly or bi-weekly surveys with questions like, "How satisfied were you with your work this week?" or "Did you have the tools you needed to succeed?" can give you a real-time pulse.

* **Retention Rates:** Keep an eye on your team's voluntary turnover rate. A low number here is a powerful sign of a positive and supportive environment.

* **1-on-1 Feedback:** Use your one-on-one meetings to have real conversations. Ask direct questions about workload, stress, and overall job satisfaction.

Performance

This is the dimension most people think of first, focusing on the outcomes of a developer's work. But unlike old-school metrics that just count widgets, SPACE frames performance in terms of quality and impact.

How to measure it:

* **Quality Metrics:** Track things like the number of bugs that slip into production or the rate of customer-reported issues.

* **Impact on Business Goals:** Draw a line from engineering work to business outcomes. Did that new feature actually lead to a measurable bump in user engagement or revenue?

* **Code Review Feedback:** Look at the quality and reliability of the code itself, as judged by peers during code reviews.

Activity

Activity measures the actions and outputs of the development process. It can be tempting to fall back into the trap of counting commits or lines of code, but the real value is in looking at a range of activities that signal a healthy, flowing process.

Important Takeaway: The goal here isn't to micromanage individual activity for performance reviews. It's about spotting team-level patterns. A sudden drop in commits across the entire team might point to a systemic blocker, like a problem with the local development environment.

How to measure it:

* **Commit Volume and Frequency:** Track the number of commits and pull requests over time to get a feel for the team's natural rhythm.

* **Code Review Volume:** Are code reviews getting done? Monitoring how many are completed can tell you a lot about team collaboration.

* **Design and Documentation:** Count how many design documents are created or updated. This is work that often goes unnoticed but is critical for long-term health.
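To show what spotting a team-level pattern might look like, here is a small sketch that flags a sharp drop in a team's weekly commit total against its own recent baseline. The `sudden_drop` name, the four-week window, and the 60% threshold are illustrative assumptions, and the input is deliberately a team total rather than a per-person count.

```python
from statistics import mean


def sudden_drop(weekly_team_commits, window=4, threshold=0.6):
    """Return True when the latest week falls below `threshold` times the
    average of the preceding `window` weeks (team totals, oldest first)."""
    if len(weekly_team_commits) < window + 1:
        return False  # not enough history to form a baseline
    *history, latest = weekly_team_commits
    baseline = mean(history[-window:])
    return baseline > 0 and latest < threshold * baseline


# Made-up weekly totals for one team.
steady = [120, 115, 130, 125, 118]
blocked = [120, 115, 130, 125, 60]
print(sudden_drop(steady))
print(sudden_drop(blocked))
```

A flag from something like this is a prompt to ask the team what changed, not a verdict on anyone's effort.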

Communication and Collaboration

Software development is a team sport, plain and simple. This dimension examines how well information flows between team members and across the wider organization. You can't build complex systems without strong collaboration.

How to measure it:

* **Onboarding Time:** How long does it take for a new developer to get up to speed and push their first meaningful contribution? A shorter time is a great sign of strong documentation and team support.

* **Code Review Quality:** Don't just count reviews; look at their quality. Are comments on pull requests constructive and timely?

* **Knowledge Sharing:** Track metrics like the number of internal tech talks given, documentation pages created, or active discussions in team channels.

Efficiency and Flow

This dimension is all about removing friction and protecting focused work time. It measures how easily developers can move through their tasks without getting bogged down by interruptions or frustrating blockers. Improving this is a direct line to a better developer experience.

How to measure it:

* **Wait Time:** How long does code sit around waiting for a review? How long do CI builds take? Long wait times are classic productivity killers.

* **Context Switching:** Survey your developers. Ask them how often their flow state gets shattered by meetings, urgent pings, or other notifications.

* **Developer Feedback:** The best way to find friction is to ask your team directly. "What's the most frustrating part of your workflow?" and "Where do you lose the most time each day?" will give you a goldmine of actionable insights.
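The wait-time question is the easiest of these to put a number on. The sketch below assumes you can export, for each pull request, when it was opened and when it got its first review; the `opened_at` and `first_review_at` keys are hypothetical names, not fields from any particular API.

```python
from datetime import datetime
from statistics import median


def review_wait_hours(pull_requests):
    """Hours each PR waited between being opened and its first review."""
    waits = []
    for pr in pull_requests:
        first_review = pr["first_review_at"]
        if first_review is None:
            continue  # still waiting; counted separately below
        waits.append((first_review - pr["opened_at"]).total_seconds() / 3600)
    return waits


# Made-up sample data.
prs = [
    {"opened_at": datetime(2024, 3, 4, 9, 0), "first_review_at": datetime(2024, 3, 4, 11, 0)},
    {"opened_at": datetime(2024, 3, 4, 10, 0), "first_review_at": datetime(2024, 3, 5, 16, 0)},
    {"opened_at": datetime(2024, 3, 5, 9, 0), "first_review_at": datetime(2024, 3, 5, 14, 0)},
    {"opened_at": datetime(2024, 3, 6, 9, 0), "first_review_at": None},
]
waits = review_wait_hours(prs)
print(f"median wait: {median(waits):.1f}h")
print(f"longest wait: {max(waits):.1f}h")
print(f"still unreviewed: {len(prs) - len(waits)}")
```

Reporting the median alongside the longest wait keeps one forgotten PR from hiding an otherwise healthy review rhythm, and the other way around.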

For more on this, check out our guide on how to improve developer experience, as it’s deeply connected to boosting efficiency and flow.

By weaving together these five dimensions, you move beyond simple output metrics and get a much richer, more accurate picture of what's really happening on your team.

How to Gather Feedback That Actually Helps

Frameworks like DORA and SPACE are a great starting point, but a dashboard built on them mostly shows you what's happening, not the specific reason why. It might flag a drop in deployment frequency, but it won’t tell you that the CI/CD pipeline has become painfully slow or that staging environments are constantly down.

That’s where talking to your team comes in. The real "aha!" moments—the insights that actually drive improvement—come from direct conversations and smart surveys. Without that human context, you're just staring at charts instead of leading a team.

Running Surveys That People Actually Answer

Let’s be honest: most surveys are terrible. They’re too long, too generic, and too infrequent to be useful. If you want feedback people will actually give, you need to make it quick, consistent, and focused on uncovering friction.

Forget the massive annual survey. Try a lightweight monthly or quarterly pulse check instead. It creates a continuous feedback loop and shows the team you’re actually listening.

Here are a few questions I’ve found that get straight to the heart of the developer experience:

* What was the most frustrating part of your workflow this past month?

* Where did you lose the most time to unexpected blockers or delays?

* Is there a tool or process that consistently slows you down?

* On a scale of 1-10, how would you rate your ability to get into a state of "flow"?

These questions push past generic satisfaction scores to pinpoint specific, actionable problems in your engineering system.
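For the 1-10 flow question, a simple month-over-month average is usually enough to see a trend. Here is a minimal sketch; the `monthly_flow_scores` helper and the rule of hiding months with fewer than three responses (so no individual answer can be singled out) are assumptions for illustration.

```python
from collections import defaultdict


def monthly_flow_scores(responses, min_responses=3):
    """Average the 1-10 flow rating per month.

    Months with fewer than `min_responses` answers report None so that a
    single person's score is never exposed.
    """
    by_month = defaultdict(list)
    for month, score in responses:
        by_month[month].append(score)
    return {
        month: round(sum(scores) / len(scores), 1)
        if len(scores) >= min_responses else None
        for month, scores in sorted(by_month.items())
    }


# Made-up anonymous responses as (month, score) pairs.
responses = [
    ("2024-03", 7), ("2024-03", 6), ("2024-03", 8),
    ("2024-04", 4), ("2024-04", 5), ("2024-04", 3),
    ("2024-05", 6),
]
trend = monthly_flow_scores(responses)
print(trend)
```

A drop like the one between March and April in this sample is the cue to go read the free-text answers from that month.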

Making One-on-Ones a Source of Truth

Your one-on-one meetings are the perfect place to connect the dots between the metrics you’re seeing and what your developers are actually experiencing. But for these conversations to work, you need psychological safety. No one will tell you what’s really going on if they think it’ll come back to haunt them in a performance review.

Frame these discussions around improving the system, not evaluating the individual.

Instead of putting someone on the spot, try an opener like, "I noticed our lead time for changes went up last sprint. From your perspective, what were some of the biggest hurdles?" This simple shift turns a potentially awkward conversation into a collaborative problem-solving session. It reinforces that the goal isn't to assign blame but to make everyone's lives easier.

The most valuable feedback often comes from asking about frustrations, not successes. When a developer feels safe enough to tell you that the CI/CD pipeline is painfully slow or that code reviews are getting stuck for days, you've struck gold. That's a concrete problem you can actually solve.

This blend of hard data and human feedback isn't just a nice-to-have anymore. A 2023 report from DX Analytics found that 94% of the 17 major tech companies they surveyed now use qualitative metrics alongside hard data. For example, Intercom and Peloton found that teams with higher engagement were 35% more likely to hit deadlines and produced 20% fewer bugs.

From Feedback to Actionable Change

Gathering feedback is the easy part. The real test is what you do with it. If developers point out problems and see nothing happen, they’ll stop bothering to share their insights. You have to close the loop.

Let's say a recurring theme pops up—multiple developers mention that staging environments are flaky. Here's how to turn that feedback into action.

* **Acknowledge and Validate:** In the next team meeting, address it head-on. "I've heard from several of you that the staging environment is a major bottleneck. Thank you for raising this. It's a high priority for us to fix."

* **Assign Ownership:** Don't let it linger as a vague problem. Create a specific ticket, assign a clear owner, and make it visible. This signals you're taking the issue seriously.

* **Communicate Progress:** Keep the team in the loop. Even small updates show that their feedback didn't disappear into a black hole.

This cycle builds trust and reinforces a culture where improvement is a shared responsibility. It works for process issues, too. If you hear that code reviews are inconsistent, it’s a perfect opportunity to refine team practices. This ultimate guide to constructive feedback in code reviews has some great, practical tips for improving that critical part of the workflow.

By combining system-level metrics with genuine, human-centric feedback, you create a powerful engine for improvement. You won't just build a more efficient engineering organization—you'll build a happier, more engaged team.

Once you've wrapped your head around modern frameworks like DORA and SPACE, the next big question is: how do you actually measure this stuff? That’s where the tooling comes in. The market is packed with options, and picking the right one is key. Your goal should be to find a tool that shines a light on system-level bottlenecks and gets people talking, not one that just enables micromanagement.

The tools out there generally fit into a few buckets. Figuring out which category makes sense for you will help you find a solution that fits your team's size, maturity, and—most importantly—culture.

Engineering Intelligence Platforms

These are the big guns, the platforms designed to give you a bird's-eye view of your entire software delivery lifecycle. They plug directly into the tools you’re already using—Git, CI/CD, project management software—and automate the heavy lifting of collecting DORA and SPACE metrics.

* **Who they're for:** Best suited for mid-to-large engineering orgs that need a single source of truth for delivery performance and developer experience data.

* **Key players:** You'll see names like **[LinearB](https://linearb.io/)**, **[Jellyfish](https://jellyfish.co/)**, and **[Velocity](https://velocity.codeclimate.com/)** pop up a lot. They’re fantastic for visualizing trends, spotting friction in your processes, and connecting the dots between engineering work and business goals.

* **What to watch for:** These platforms are powerful, but they aren't cheap. Even more important is the sheer volume of data they produce. If you don't have a clear plan for how to use it, you can easily create "analysis paralysis." Or worse, you might be tempted to use the data to rank individuals, which is a surefire way to kill trust.

The best way to use these platforms is to look for team-level patterns. For example, if a team’s Cycle Time is steadily creeping up, it’s a signal to ask, "What systemic issue is slowing us down?" not "Who's the slowest developer on the team?"

Developer Experience (DX) Platforms

While the big intelligence platforms often focus on delivery metrics, DX platforms zoom in on the more qualitative side of the SPACE framework. Their entire reason for being is to measure and improve the day-to-day life of your developers.

Take DX, for example. It specializes in gathering feedback through targeted surveys and analyzing developer environments to pinpoint what’s causing friction. Is it a painfully slow CI build? A clunky local setup process? Or just way too many meetings breaking up everyone's flow state? These tools help you answer the most important question of all: "Is it easy and enjoyable to get work done around here?"

Leveraging Your Existing Toolset

Here’s the thing: you don't always need a shiny new platform to get started. Many teams can get surprisingly far just by pulling data from the tools they already use every single day.

* **Jira/Asana:** Your project management tool is a goldmine. Tracking how long a ticket takes to get from "In Progress" to "Done" gives you cycle time, a handy manual stand-in for **Lead Time for Changes** (which strictly runs from commit to production).

* **GitHub/GitLab:** Your version control system is full of useful data. If every merge to your main branch triggers a deploy, counting those merges is a reasonable proxy for **Deployment Frequency**, and pull request metrics give you a feel for collaboration patterns.

* **Slack:** Never underestimate the power of a simple, lightweight survey. A tool like [Polly](https://www.polly.ai/) or even a quick custom bot can run weekly pulse checks to see how the team is feeling.

This DIY approach is a fantastic, low-cost way to dip your toes in the water. It forces you to be deliberate about what you're measuring and helps you build a solid understanding of the metrics before you shell out for a more automated solution. The key is to start small. Just pick one or two metrics that speak to a pain point you already know you have.
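If you go the DIY route with ticket data, the calculation can be as small as the sketch below. It assumes you have each ticket's status history as `(status, timestamp)` pairs, which is a hypothetical shape rather than any tool's export format. Keep in mind that "In Progress" to "Done" measures ticket cycle time, which only approximates the commit-to-production definition of Lead Time for Changes.

```python
from datetime import datetime


def in_progress_to_done(transitions):
    """Time from the first 'In Progress' to the last 'Done'.

    `transitions` is a chronological list of (status, timestamp) pairs.
    Returns None for tickets that were never started or never finished.
    """
    started = next((ts for status, ts in transitions if status == "In Progress"), None)
    finished = None
    for status, ts in transitions:
        if status == "Done":
            finished = ts  # keep the latest 'Done' in case the ticket was reopened
    if started is None or finished is None or finished < started:
        return None
    return finished - started


# Made-up history for one ticket, including a bounce back from review.
ticket = [
    ("To Do", datetime(2024, 1, 8, 9, 0)),
    ("In Progress", datetime(2024, 1, 8, 13, 0)),
    ("In Review", datetime(2024, 1, 9, 10, 0)),
    ("In Progress", datetime(2024, 1, 9, 15, 0)),
    ("Done", datetime(2024, 1, 10, 13, 0)),
]
elapsed = in_progress_to_done(ticket)
print(elapsed)
```

Measuring from the first "In Progress" means rework after review counts toward the total, which is usually what you want when hunting for bottlenecks.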

Answering the Tough Questions About Productivity Metrics

Even with a solid framework in mind, switching to a new way of measuring productivity can feel daunting. Most engineering leaders run into the same handful of tricky questions. Let's tackle them head-on.

How Do I Start Without My Team Feeling Spied On?

This is the big one, and it all comes down to transparency and framing. Don't roll out a massive dashboard and say, "Here are your new numbers." That's a recipe for distrust.

Instead, start small. Pick one or two team-level DORA metrics, like Lead Time for Changes, and introduce them as tools for spotting system-wide friction. The conversation should sound like this: "Hey team, I think our review process is slowing us down. Let's track Lead Time to see if we can find the bottleneck together and make everyone's lives easier."

Get your team involved from the get-go. Ask them what metrics would actually help them understand their own challenges. When you combine this hard data with something softer, like a quick monthly survey asking "What's the most frustrating part of your workflow?", it proves you're listening. The goal is to make this a collaborative effort to improve the system, not a top-down mandate.

The moment your team believes metrics are being used for them and not against them, you’ve won. Trust is everything. The objective is to foster a culture of shared ownership over the engineering system's health.

Are Metrics Like Story Points or Lines of Code Useless?

Not completely, but their usefulness is incredibly narrow. Think of story points as a tool for one thing and one thing only: short-term planning for a single team. They should never, ever be used to compare developers or different teams. One team's "5-point story" is completely different from another's, and that's okay.

Lines of code (LOC) is an even more dangerous metric. On its own, it tells you almost nothing about value or quality. A developer could delete 500 lines of legacy code and deliver more value than someone who wrote 1,000 new lines. That said, a sudden, organization-wide drop in LOC might point to a broken CI/CD pipeline, so it can be a faint background signal. Just don't ever tie it to performance reviews.

How Do DORA and SPACE Fit Together?

They're two sides of the same coin, giving you a complete picture of your engineering health.

* **DORA** is your system's EKG. It tells you *what* is happening with your delivery process: the raw signals of speed and stability.

* **SPACE** is the conversation with the patient. It helps you understand the *why* behind those numbers by looking at the human side of things.

Imagine your Deployment Frequency (DORA) takes a nosedive. The SPACE framework is how you diagnose the cause. Is it because developers are burned out (Satisfaction)? Is a new tool creating friction (Efficiency & Flow)? Did a re-org disrupt communication (Collaboration)? Using them together connects system performance directly to team well-being.

If I Can Only Track One Metric, Where Should I Start?

While there's no magic bullet, Lead Time for Changes from the DORA framework is the best place to start. It measures the entire journey from a code commit to that code running in production. Because it touches every part of your delivery pipeline, it's an incredibly powerful indicator.

Think about it: to improve your lead time, you have to fix your slow code reviews, automate your testing, and streamline your deployment process. It's a high-leverage metric that forces you to make improvements across the board. If you're going to pick one, make it that one.
